All Scientific Verification Becomes Replication

The old ways of peer-review, PDFs, prestige, and citations won't survive AI.

Our scientific research complex is in for a reckoning in the age of AI. The old ways of publishing PDFs and judging quality with peer-review and prestige are nearing end of life. They have failed to evolve for today’s world of computation and automation, and AI will wipe out whatever usefulness they have left.

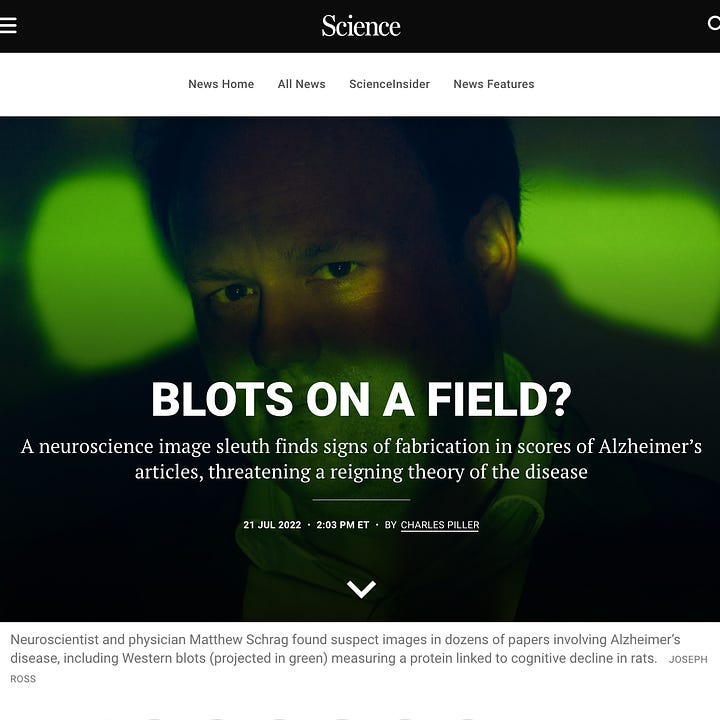

What’s coming isn’t merely a wave of generative write-ups and AI-assisted review, as some would have you believe, but a tsunami of fraudulent papers that’ll wash away all remaining rigour in our current scientific complex.

The only form of scientific verification that’ll remain standing when the tides recede will be independent replication—the only true test of scientific validity.

Fraud, By The Millions

AI will soon become world-class at generating fake but plausible-sounding papers: fake methods, results, charts, diagrams, code, data, everything, all indiscernible from real research.

Totally plausible fake papers by the millions, indistinguishable from "the real thing", coming shortly.

— Marc Andreessen, X

Every scientist will have the power in their hands to produce all the fake revolutionary papers they want, by the thousands. Many won’t choose to use that power, but malicious actors will abuse it. They will pollute the scientific record for their own self-progression and preservation, as has already been happening for decades.

The weight of a million fraudulent papers will crumble all respect for the old indicators of quality.

Peer-Review Gets DDoS’ed

All the fake papers that flood the research ecosystem will DDoS peer-review with falsities. Journals won’t know what to do.

Peer-review is already well in decline; fraud consistently slips through its walls. It couldn’t even catch bogus work 5 years ago, and it most certainly won’t be able to catch the output of tomorrow’s advanced AI.

Human reviewers won’t be able to tell the difference between real and fake papers, especially when authors can hide their code and data from critique. Some suppose AI reviewers will do a better job (an academic ouroboros of sorts), but LLMs will only be as good as their internal representations of reality, and those won’t be perfect.[1]

Scientific fraud will get supercharged by AI, and peer-review won’t catch shit.

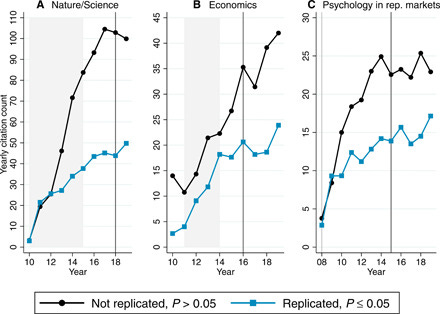

Prestige and Citations Won’t Correlate With Correctness

Prestige and citations won’t be enough to differentiate truth from falsities. Citations are just a popularity metric, not a signal of truth. Prestige is no better; it’s merely a proxy for quality.

Citations became broadly adopted in the pre-Internet world because a retrospective analysis correlated high citations with high-quality, high-impact research.

But today the opposite can hold: high citations can correlate with fraud. So citations won’t be useful in identifying real research in the coming flood of fraud. They won’t matter when you can fabricate hundreds of citations with fake papers.

Prestige will only persist to serve the established scientific oligarchy.

When we’re faced with millions of potentially fraudulent papers, it will be tempting to rely on established impact factors and prestige to identify signal. We’ll be told to trust papers from authors with good track records, denoted by their high citations and prestige.[2]

It’s this regression back to the old markers of excellence that’ll be fraught with error, because prestige and citations themselves are far from perfect.

We have very notable examples of how badly these proxies for quality have led us astray, and people have been correctly calibrated to distrust the authority of the ruling class.

Efforts to solidify prestige and citations as indicators of truth will fail to resonate beyond the most devoted disciples of the established elite. People will just turn away.

But amidst that collapse in trust and signal, we’ll need a better way to identify truth.

Verify Against Ground Truth

How do we know something to be true? What exists in the realm of objective truth?

Truth is whatever reality says is true. Reality is—in a sense—the ultimate source of truth. It’s what we perform our scientific experiments against. We ask reality questions and it responds with answers.

Independent replication is verification against ground truth: we re-run experiments against reality and see what answers come up.

It’s verification against ground truth that’s robust to AI. No matter how many fake papers AI can create, and no matter how well it can manipulate data, as long as we can replicate an experiment and verify it against reality, we can know whether it’s legit or not.

As long as we can replicate results, it’s legit.

LK-99 was a glimpse into this future, where we discard all notions of prestige, peer-review and citations in the pursuit of replication. Nothing mattered except replication.

As the old indicators of quality lose their usefulness, independent replication will emerge as the only effective way to reliably differentiate fake and real research.

Replication will soon be the only remaining test of scientific validity.[3]

Defence Against Disruption

These changes are going to come fast and hard, and they will catch a lot of people off-guard. Which is why we should work to defend against them now.

So, what can we do about the coming flood of fraud? Unfortunately, or fortunately (depending on your perspective), the existing academic complex of journals and institutions isn’t going to adjust well to AI; it’s more likely to just ban it.

It’s up to innovators at the frontier to come up with and scale solutions.

The three solutions I see as being the most impactful are:

1. Cheaper Replication
2. Prediction Markets for Replicability
3. Transparent and Robust Publishing

I’m independently either working on, supporting, or evangelizing each one.

If you’re interested in getting involved, reach out.

With that said, let’s go through each.

1. Cheaper Replication

This is a longer-term defence, but there are two major trends that have the potential to drastically reduce the cost of replication: robotic automation and low-cost energy.

Both are in development today, in the form of humanoid robots, the mass-installation of solar, and perhaps nuclear energy. We should ride these trends to drive down experimentation and replication costs, automating wherever we can to improve our capacity to replicate research.

2. Prediction Markets for Replicability

Prediction markets are not a new idea, but a good implementation may be newly feasible with crypto.

LK-99 showed the potential. We’d leverage free markets, open to bets from scientists and the public, to estimate how replicable a given result is.

It’d be a useful indicator for research that takes significant resources to replicate—a backup of sorts, for when and where we don’t have information on replications.
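To make the idea concrete, here is a minimal sketch of how a market price could double as a replicability estimate. The essay doesn't specify a mechanism, so this assumes a logarithmic market scoring rule (LMSR), one well-known automated market maker design. The class name `ReplicationMarket`, its method names, and the liquidity value are all hypothetical, and nothing here touches crypto or real money.

```python
import math


class ReplicationMarket:
    """Toy binary market on the question "will this result replicate?".

    Assumption: prices follow a logarithmic market scoring rule (LMSR).
    """

    def __init__(self, liquidity: float = 100.0) -> None:
        self.b = liquidity  # larger values make prices move more slowly
        self.q_yes = 0.0    # outstanding "it replicates" shares
        self.q_no = 0.0     # outstanding "it does not replicate" shares

    def _cost(self, q_yes: float, q_no: float) -> float:
        # LMSR cost function: C(q) = b * ln(e^(q_yes/b) + e^(q_no/b))
        return self.b * math.log(
            math.exp(q_yes / self.b) + math.exp(q_no / self.b)
        )

    def probability_replicates(self) -> float:
        """Current price of a YES share, read as an implied probability."""
        e_yes = math.exp(self.q_yes / self.b)
        e_no = math.exp(self.q_no / self.b)
        return e_yes / (e_yes + e_no)

    def buy(self, outcome: str, shares: float) -> float:
        """Buy shares of "yes" or "no" and return what the trade costs."""
        if outcome not in ("yes", "no"):
            raise ValueError("outcome must be 'yes' or 'no'")
        before = self._cost(self.q_yes, self.q_no)
        if outcome == "yes":
            self.q_yes += shares
        else:
            self.q_no += shares
        return self._cost(self.q_yes, self.q_no) - before


market = ReplicationMarket()
print(market.probability_replicates())  # 0.5 before anyone has traded
cost = market.buy("yes", 50)            # a bettor backs replication
print(round(cost, 2), round(market.probability_replicates(), 3))
```

Each YES share would pay out 1 unit if an independent replication succeeds, so the price a bettor is willing to pay is their implied probability that the result holds up.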

3. Transparent and Robust Publishing

I’ve written in detail about this already, but PDFs clearly don’t contain enough information and rigour for us to validate research.

We must solidify the scientific information supply chain. That means more information, and better tools to discern the legitimacy of that information.

The first part is to maximize the amount of useful information that’s published—code, data, videos, images, machine artifacts; anything that can increase our confidence that the information is real and not faked by AI.

Second is to back that information with sufficient defence against manipulation, and that means hashing metadata onto blockchains: battle-tested ledgers with a track record of more than a decade of preventing manipulation of information.

Taken together, it means researchers will have to share more of their research artifacts, and publish them on-chain for immutability.
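As a rough illustration of the hashing step, the sketch below fingerprints a set of research artifacts with SHA-256 and rolls them into a single digest that could then be recorded on a ledger. The on-chain write itself is deliberately left out, and the file names, contents, and function names are hypothetical placeholders rather than any particular platform's format.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of raw bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(artifacts: dict[str, bytes]) -> dict[str, str]:
    """Map each artifact name to the digest of its contents."""
    return {name: sha256_hex(content) for name, content in sorted(artifacts.items())}


def manifest_digest(manifest: dict[str, str]) -> str:
    """Hash a canonical JSON encoding of the manifest into one fingerprint."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return sha256_hex(canonical.encode("utf-8"))


def verify(artifacts: dict[str, bytes], published_digest: str) -> bool:
    """Check that the artifacts still match a previously published digest."""
    return manifest_digest(build_manifest(artifacts)) == published_digest


# Hypothetical artifacts; in practice these would be read from files.
artifacts = {
    "analysis.py": b"print('fit model')\n",
    "measurements.csv": b"sample,resistance_ohm\n1,0.52\n",
}

# This digest is the value one would publish to the ledger (step not shown).
published = manifest_digest(build_manifest(artifacts))

# Altering a single byte of the data after publication changes the digest.
tampered = dict(artifacts)
tampered["measurements.csv"] = b"sample,resistance_ohm\n1,0.00\n"

print(verify(artifacts, published))  # True
print(verify(tampered, published))   # False
```

Only the digest needs to live on-chain. The artifacts themselves can be hosted anywhere, since anyone can recompute the fingerprint and compare it against the published one.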

Onwards…

Whatever happens, our academic research complex is in for quite a disruption.

The old ways of PDFs, peer-review, prestige, and citations will die a glorious death amidst a jubilee of fraud. Only the truest test of scientific rigour—independent replication—will remain standing amid the chaos.

There is work to be done to successfully preserve the integrity of science. We must prioritize replication and lower costs, increase the visibility of information on replicability, and solidify how we share and publish scientific information.

Anything less, and it will become a lot harder to discern truth in scientific research . . .

[1] If we had LLM reviewers in the 1600s, would the heliocentric model Galileo championed have been rejected or approved? It would likely have been rejected. Heliocentrism would’ve conflicted with the existing world-views encoded in the LLM. Revolutionary developments in science are by definition non-consensus, and LLMs aren’t equipped to “accept” drastic divergence from the status quo.

[2] Young researchers are already coerced into stamping the name of an older, reputable researcher onto their own papers, just to signal legitimacy and increase the likelihood of prestigious publication. Expect that to intensify.

[3] Yes, it will be very resource-intensive to replicate all this research, which is why I propose three potential solutions to alleviate the problem.

The other thing worth noting, however, is that the cost to replicate will likely fall dramatically over the coming decades with robotic automation and low-cost energy. I will have a longer-form article on this soon…